International Conference on Robotics and Automation, 2021

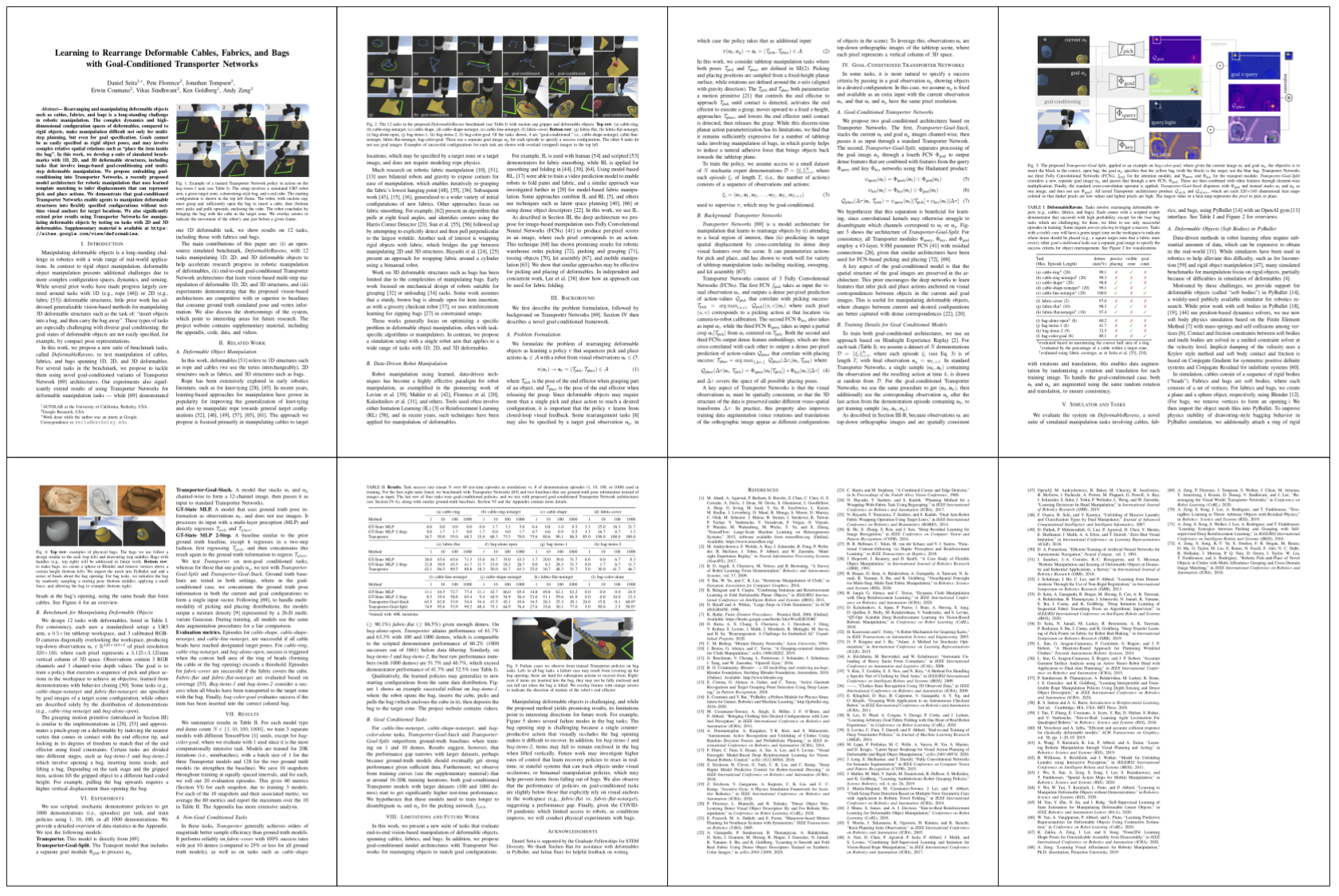

Abstract. Rearranging and manipulating deformable objects such as cables, fabrics, and bags is a long-standing challenge in robotic manipulation. The complex dynamics and high-dimensional configuration spaces of deformables, compared to rigid objects, make manipulation difficult not only for multi-step planning, but even for goal specification. Goals cannot be as easily specified as rigid object poses, and may involve complex relative spatial relations such as "place the item inside the bag". In this work, we develop a suite of simulated benchmarks with 1D, 2D, and 3D deformable structures, including tasks that involve image-based goal-conditioning and multi-step deformable manipulation. We propose embedding goal-conditioning into Transporter Networks, a recently proposed model architecture for robotic manipulation that uses learned template matching to infer displacements that can represent pick and place actions. We demonstrate that goal-conditioned Transporter Networks enable agents to manipulate deformable structures into flexibly specified configurations without test-time visual anchors for target locations. We also significantly extend prior results using Transporter Networks for manipulating deformable objects by testing on tasks with 2D and 3D deformables.

Website Updates

06/18/2023: The arXiv edition has been updated with a description of physical experiments.

06/11/2023: Code for physical experiments released here.

03/01/2023: Added clarification on simulation results and a bar chart.

02/24/2023: Added four more physical experiment videos and an evaluation metric.

02/22/2023: The physical experiment videos have been improved for visual clarity.

02/21/2023: We've added physical cable manipulation experiments. We are actively adding more.

10/23/2022: Added some more examples of goal-conditioning for Cable-Line-Notarget.

05/30/2021: Finalized the website for the ICRA 2021 paper.

Paper

Latest version (June 18, 2023): (arXiv link here). The arXiv link is the latest version and includes the supplementary material.

Team

3-Minute Summary Video (with Captions)

Physical Experiments

Context: we originally wrote this paper in 2020, in the middle of the COVID-19 pandemic, and due to the circumstances we (virtually) presented our ICRA 2021 paper with simulation-only experiments. In early 2023, we returned to this project to demonstrate how Goal-Conditioned Transporter Networks (GCTNs) could be applied on physical hardware. Below, we show experiments inspired by the "Cable-Line-Notarget" task in our DeformableRavens simulation benchmark. For the cable, we use this chain with 13mm balls attached together, painted with a uniform color.

Experiment Details: we collect 30 demonstrations and use 24 of them for training (with the other 6 to monitor validation loss). We also collect 20 separate images of the cable on the workspace to be used as "goal images" at test time. For test-time execution, we use the snapshot after 20K iterations of training GCTN, the same number of iterations as in simulation, where one "iteration" uses a batch size of 1 item. We use a Franka robot and move it using frankapy. An Azure Kinect camera is mounted on the Franka's end-effector; the robot returns to a top-down home pose after each action to query updated images of the workspace. We do not use specialized grippers. To initialize the setup, the human operator lightly tosses the cable on the workspace. We limit the number of robot actions to 10 per episode (i.e., rollout).

Changes from Simulation: enabling GCTNs for physical cable manipulation requires a few adjustments from simulation. Perhaps most critically, we cannot assume our gripper perfectly grasps the deformable. As a heuristic, we take a local crop centered at the picking point and compute the best-fit tangent line, and then have the gripper's fingertips open in the direction perpendicular to that tangent line. Second, to ease training, we currently use a binary segmentation mask as our images, instead of RGB and depth.
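The grasp-orientation heuristic above can be sketched as follows. This is a minimal illustration, not the released physical-experiment code: the function name `gripper_opening_angle`, the crop half-width `half_size`, and the use of the principal axis of the cable pixels as the "best-fit tangent line" are all assumptions made for this example.

```python
import math

def gripper_opening_angle(mask, pick_rc, half_size=16):
    """Angle (radians, in [0, pi)) along which the fingertips open.

    mask: 2D binary image (rows of 0/1 values), nonzero on the cable.
    pick_rc: (row, col) of the picking pixel.
    half_size: hypothetical crop half-width in pixels.
    Angles are measured in image coordinates (x = column, y = row).
    """
    r, c = pick_rc
    h, w = len(mask), len(mask[0])
    xs, ys = [], []
    # Collect cable pixels inside the local crop around the picking point.
    for i in range(max(r - half_size, 0), min(r + half_size + 1, h)):
        for j in range(max(c - half_size, 0), min(c + half_size + 1, w)):
            if mask[i][j]:
                xs.append(j)
                ys.append(i)
    n = len(xs)
    if n < 2:
        return None  # too few cable pixels to fit a line
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    # Orientation of the principal axis of the 2x2 scatter matrix,
    # used here as the tangent direction of the cable.
    tangent = 0.5 * math.atan2(2.0 * sxy, sxx - syy)
    # The fingertips open perpendicular to the tangent.
    return (tangent + math.pi / 2) % math.pi
```

For a cable segment that runs horizontally through the crop, this returns an opening direction of pi/2 (vertical in the image), so the fingers straddle the cable rather than closing along it.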

Quantitative Evaluation Metric: we report cable mask intersection over union (IoU). At each time step, we compute the IoU between the current and goal cable mask images (the same images that are passed as input to the GCTN). We consider a test-time episode rollout a success if the cable mask IoU ever exceeds a threshold within the 10-action limit. Our threshold is currently 0.25, which we found to correlate with strong qualitative results while remaining forgiving of certain physical limitations. For example, the robot will have some imprecision when picking and placing due to slight errors in calibration.
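As a rough sketch of this metric (not the evaluation script itself), the IoU and the success check could be written as below. The function names, the convention that an empty union gives an IoU of 0, and the strict "greater than" comparison are assumptions for illustration; only the 0.25 threshold and the 10-action limit come from the description above.

```python
def mask_iou(current, goal):
    """Intersection over union of two same-sized binary masks."""
    inter = union = 0
    for row_c, row_g in zip(current, goal):
        for c, g in zip(row_c, row_g):
            inter += bool(c and g)
            union += bool(c or g)
    # Assumption: two empty masks are given an IoU of 0.
    return inter / union if union else 0.0

def episode_success(ious_after_actions, threshold=0.25, max_actions=10):
    """True if the IoU exceeds the threshold after any of the allowed actions.

    ious_after_actions[t] is the cable mask IoU measured after action t + 1.
    """
    return any(v > threshold for v in ious_after_actions[:max_actions])
```

For instance, a rollout whose IoU reaches 0.278 at some step within the limit counts as a success, while one that peaks at 0.131 does not.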

Videos of the Trained GCTN at Test Time

All videos here are test-time rollouts from the same GCTN policy trained on 24 demonstrations, and are at 4X speed. In all the videos, we overlay (to the right) the current image of the workspace, and below it, the goal image. The current image changes after each action while the goal image remains fixed. For visual clarity we overlay RGB images in the videos, but the GCTN takes as input the current and goal cable binary mask images.

The following two videos show successful examples of rollouts. The GCTN was able to increase the cable mask IoU from 0.0 to 0.278 in the first video, and from 0.0 to 0.308 in the second video.

The following two videos show two more successful rollouts. The GCTN was able to increase the cable mask IoU from 0.0 to 0.454 in the first video, and from 0.052 to 0.417 in the second video. The second video shows that the policy has a few suboptimal actions, but is able to recover.

Here are two more successful rollouts (as judged by cable mask IoU). The GCTN was able to increase the cable mask IoU from 0.083 to 0.313 in the first video, and from 0.048 to 0.265 in the second video. The first video also shows that the GCTN is able to manipulate the cable even when it is partially outside the workspace (see 1:00), which did not happen in the training data.

The following two videos demonstrate the challenge of precisely getting a cable to align with a goal. Even though the robot might qualitatively move the cable to a "reasonable" goal configuration, the cable mask IoU only gets to 0.131 and 0.013 at the end of these respective videos.

Limitations: First, there can be grasp failures, particularly when the cable self-overlaps, as shown in the first video below (0:36 to 0:38). Interestingly, the GCTN model was still able to make the cable end up in a nice configuration, as the next time step resulted in a different picking (and thus placing) point, and the final cable mask IoU is 0.333, which exceeds our success cutoff. Second, some failures are due to poor predictions from the GCTN model. For example, the second video (which has a challenging starting cable configuration) shows a 10-action sequence in which the model picks at suboptimal points (e.g., at 1:06) or places at the "wrong" cable endpoint location (e.g., at 1:56), in addition to a grasping failure at 0:06.

Videos -- Scripted Demonstrations

These are screen recordings of the scripted demonstrator policy for tasks in the DeformableRavens simulation benchmark. The bag tasks have screen recordings slightly sped up, and we compress some GIFs to reduce file sizes.

Cable-Ring

Cable-Ring-Notarget

Cable-Shape

Cable-Shape-Notarget

Cable-Line-Notarget

Fabric-Cover

Fabric-Flat

Fabric-Flat-Notarget

Bag-Alone-Open

Bag-Items-1

Bag-Items-2

Bag-Color-Goal

Videos -- Learned Policies (Bag Tasks)

These are screen recordings of learned policies when deployed on test-time starting configurations. For some GIFs, to speed them up and reduce file sizes, we remove frames corresponding to pauses between pick and place actions.

Bag-Items-1. (Zoomed-in) Transporter trained on 100 demos. It successfully opens the bag, inserts the cube, and brings the bag to the target.

Bag-Items-2. Transporter trained on 1000 demos. It successfully opens the bag, inserts both blocks, and brings the bag to the target.

Bag-Color-Goal. Transporter-Goal-Split trained on 10 demos. The goal image (not shown above) shows the item in the red bag, in which the policy correctly inserts the block.

Videos -- Goal Conditioning in Depth (Cable-Line-Notarget)

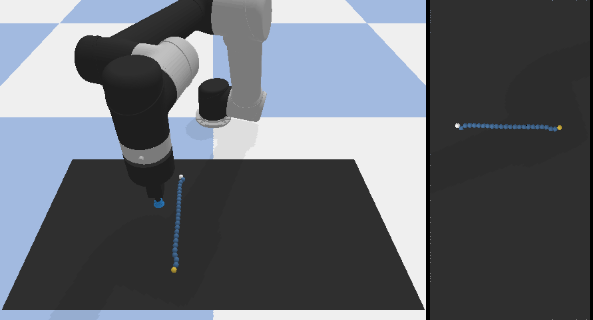

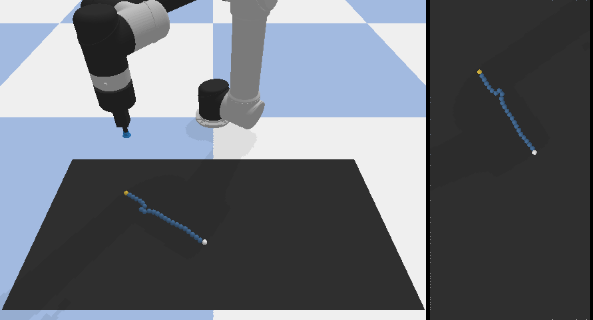

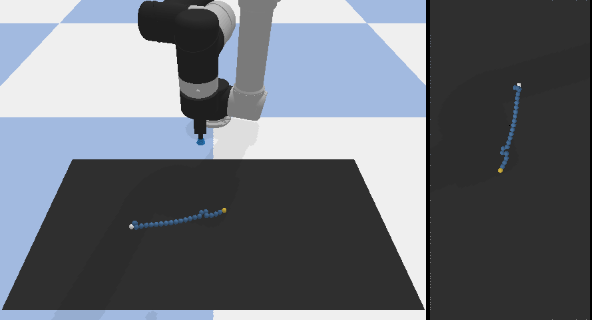

Test-time rollouts (on Cable-Line-Notarget) of one GCTN policy trained on 1000 demonstrations. The cables start from the same starting configuration, and the only thing that changes is the goal image. This shows the utility of a goal-conditioned policy.

For clarity, we show the goal images below each of the videos. The goal images consist of two parts: the scene as viewed from the front camera (to the left, purely for visualization) and the top-down RGB image (to the right), which is actually supplied to the GCTN as input at each time step. (GCTN also uses the corresponding top-down depth goal image as input.) The GCTN policy succeeded in all three examples by bringing the cable close enough to the goal to satisfy the tolerance threshold.

Cable-Line-Notarget, Goal 1.

Cable-Line-Notarget, Goal 2.

Cable-Line-Notarget, Goal 3.

Videos -- Limitations and Failure Cases

These are screen recordings showing some informative failure cases, which may happen with scripted demonstrators or with learned policies. These motivate some interesting future work directions, such as learning policies that can explicitly recover from failures.

Bag-Items-1. Possibly the most common failure case. Above is the scripted policy, but this also occurs with learned Transporters. The cube is at a reasonable spot, but falls out when the robot attempts to lift it.

Bag-Items-1. Failure with a learned Transporter policy (trained with 10 demos). The policy repeatedly attempts to insert the cube in the bag but fails, and (erroneously) brings an empty bag to the target.

Quantitative Results (Updated 03/01/2023)

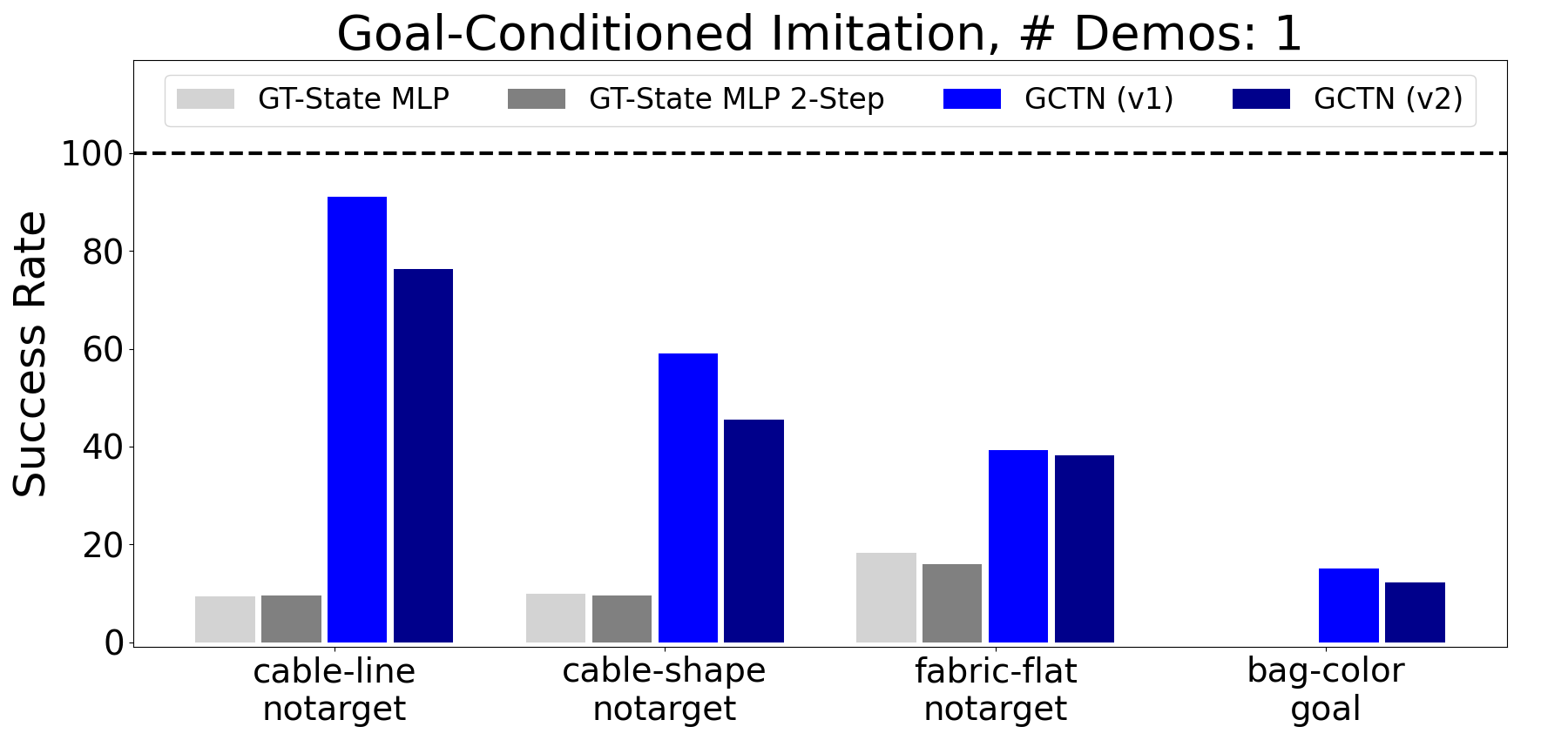

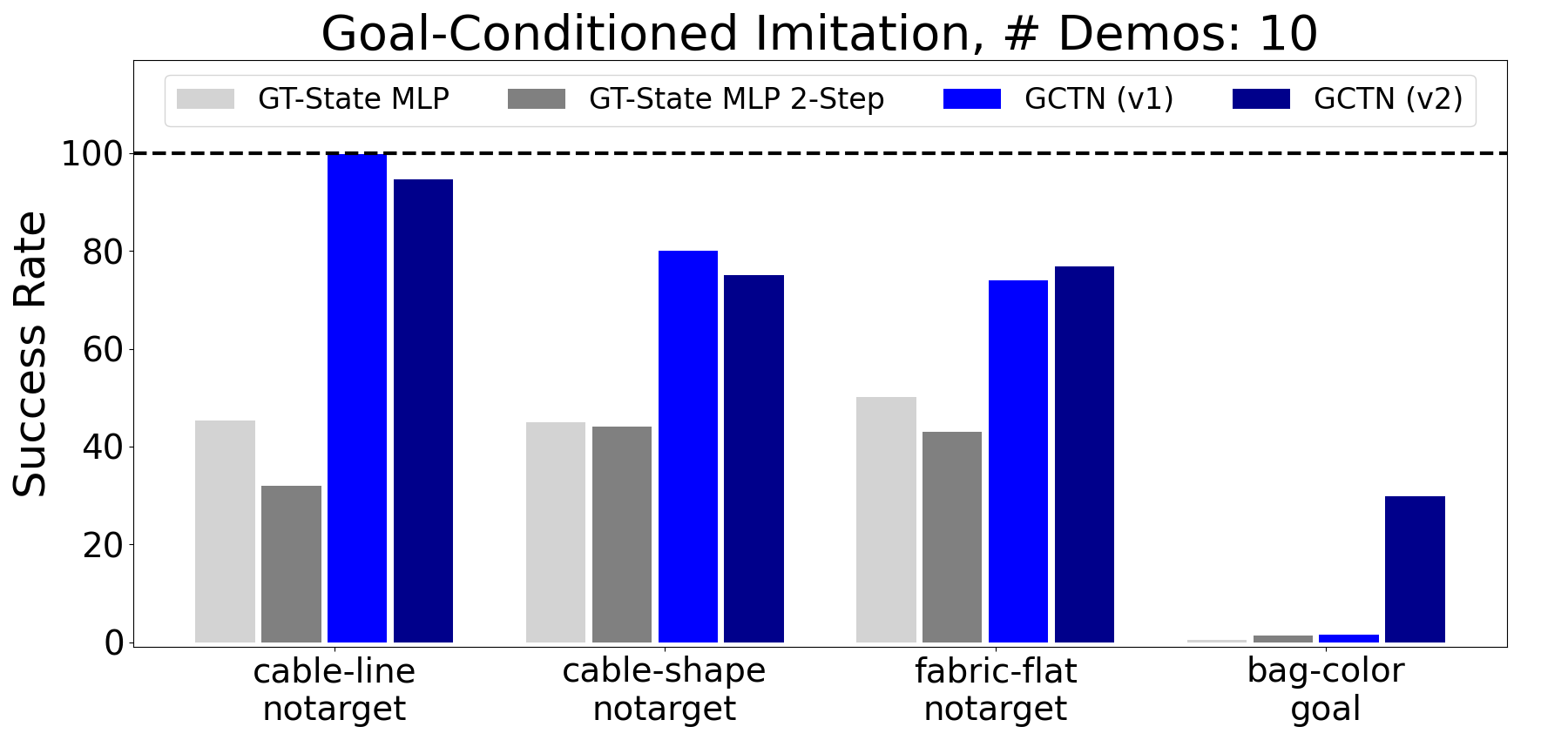

In early 2023, we found a bug in how we were processing the goal image data into both variants of goal-conditioned Transporters ("Transporter-Goal-Stack" and "Transporter-Goal-Split"). This bug does not affect any of the other baselines, nor does it affect the vanilla Transporter networks. Fixing the bug results in improved performance for GCTNs on most of the tasks and variants. For fairness, we also retrained the GT-State MLP and GT-State MLP (2-step) baselines, which got results similar to the earlier ones. Below are bar charts showing the updated performance results for 1 and 10 demonstrations. We also see that the baseline version of "Transporter-Goal-Stack" is slightly better now. For clarity, we use "GCTNs" to refer to either of the two goal-conditioned Transporter models. In the charts below, GCTN (v1) refers to Transporter-Goal-Stack, and GCTN (v2) refers to Transporter-Goal-Split. As of June 2023, the latest arXiv version has these updated results.

Code

Here is the GitHub link: https://github.com/DanielTakeshi/deformable-ravens. If you have questions, please use the public issue tracker. I will try to actively monitor the issue reports, though I cannot guarantee a response.

Demonstration Data

These are zipped files that contain demonstration data of 1000 episodes. These are used to train policies.

Cable-Ring --- (LINK (4.0G))

Cable-Ring-Notarget --- (LINK (3.9G))

Cable-Shape --- (LINK (4.1G))

Cable-Shape-Notarget --- (LINK (4.1G))

Cable-Line-Notarget --- (LINK (3.3G))

Fabric-Cover --- (LINK (1.6G))

Fabric-Flat --- (LINK (2.2G))

Fabric-Flat-Notarget --- (LINK (2.2G))

Bag-Alone-Open --- (LINK (2.5G))

Bag-Items-1 --- (LINK (2.2G))

Bag-Items-2 --- (LINK (2.8G))

Bag-Color-Goal --- (LINK (2.2G))

Block-Notarget --- (LINK (1.0G))

These are zipped files that contain demonstration data for 20 goals. These are only used for the goal-conditioned cases to ensure evaluation is done in a reasonably consistent manner.

Cable-Shape-Notarget --- (LINK)

Cable-Line-Notarget --- (LINK)

Fabric-Flat-Notarget --- (LINK)

Bag-Color-Goal --- (LINK)

Block-Notarget --- (LINK)

To unzip, run tar -zvxf [filename].tar.gz. Some of the data files will unzip to different file names, since we changed some task names for the purpose of the paper (while keeping the code and data with the original task names). Specifically, (1) the three "fabric" tasks are referred to as "cloth", (2) "bag-items-1" and "bag-items-2" are referred to as "bag-items-easy" and "bag-items-hard", and (3) "block-notarget" is referred to as "insertion-goal".
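The renaming described above can be expressed as a small lookup. This is only a sketch: the function name `data_name` is hypothetical, and it assumes the on-disk names are the lowercase, hyphenated task names with "fabric" swapped for "cloth" as a prefix. Check the extracted directory names before relying on it.

```python
# Explicit renames stated in the text (paper name -> code/data name).
EXPLICIT_RENAMES = {
    "bag-items-1": "bag-items-easy",
    "bag-items-2": "bag-items-hard",
    "block-notarget": "insertion-goal",
}

def data_name(paper_name):
    """Map a task name as written in the paper to the original code/data name."""
    name = paper_name.lower()
    if name in EXPLICIT_RENAMES:
        return EXPLICIT_RENAMES[name]
    # Assumption: the three "fabric" tasks differ only in the prefix.
    if name.startswith("fabric-"):
        return "cloth-" + name[len("fabric-"):]
    return name  # cable and other bag tasks keep their names
```

Under these assumptions, `data_name("Fabric-Flat-Notarget")` gives `"cloth-flat-notarget"`, and `data_name("Cable-Ring")` is returned unchanged apart from lowercasing.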

The demonstration data should be unzipped to data/ and the goals data should be unzipped to goals/.

BibTeX

@inproceedings{seita_bags_2021,
    author = {Daniel Seita and Pete Florence and Jonathan Tompson and Erwin Coumans and Vikas Sindhwani and Ken Goldberg and Andy Zeng},
    title = {{Learning to Rearrange Deformable Cables, Fabrics, and Bags with Goal-Conditioned Transporter Networks}},
    booktitle = {IEEE International Conference on Robotics and Automation (ICRA)},
    year = {2021}
}

Acknowledgements

Daniel Seita is supported by the Graduate Fellowships for STEM Diversity (website). We thank Xuchen Han for assistance with deformables in PyBullet, and Julian Ibarz for helpful feedback on writing.